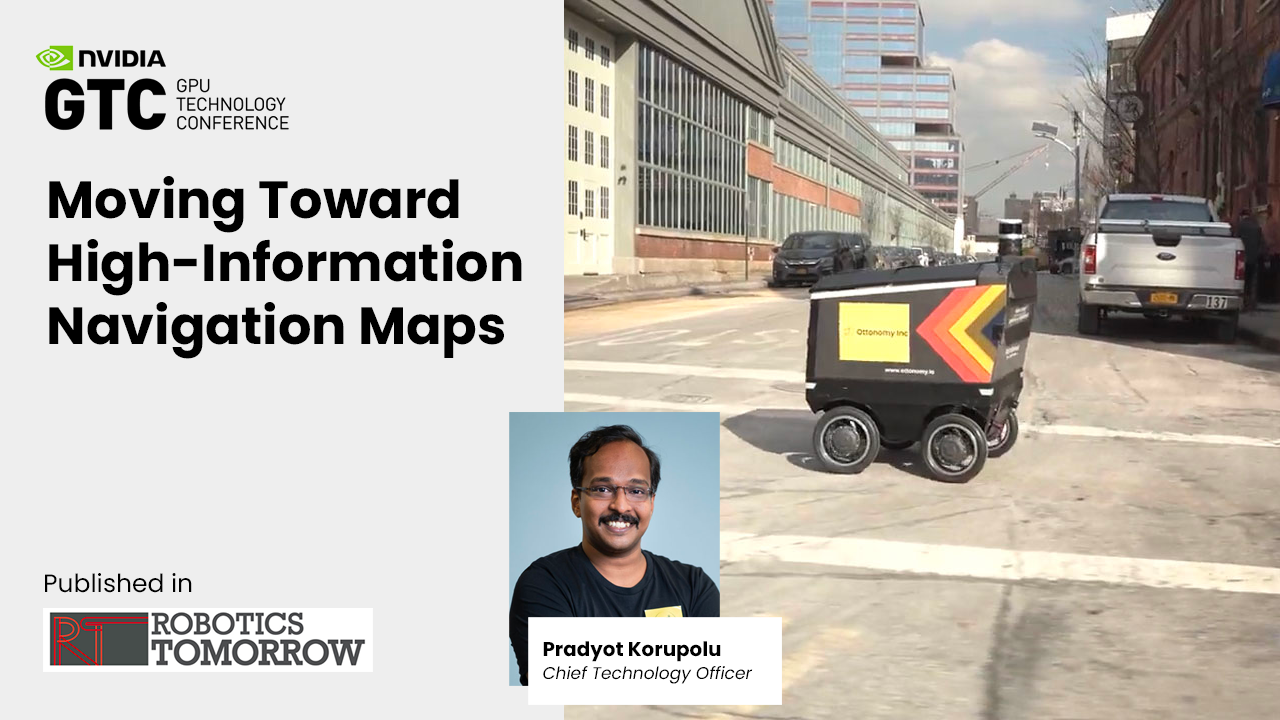

CTO and co-founder Pradyot Korupolu introduces “high information maps” for contextual navigation of delivery and autonomous vehicles at the industry’s leading AI developer conference

Today, Pradyot Korupolu, CTO and co-founder of leading autonomous delivery robotics company Ottonomy.IO, will present at the NVIDIA GTC 2022 virtual conference. In his session, part of the event’s “Automotive Transportation-Autonomous Robotics” industry segment, he will discuss autonomous navigation solutions, focusing on the move towards high information navigation maps.

The consumer-facing retail and restaurant industries have seen a huge surge in robots operating in everyday human environments, both indoors and outdoors. Ottonomy.IO has deployed robots in multiple consumer environments, including airports, retail spaces and campuses.

“With a strong track record in autonomous driving technologies, our team has been able to translate principles of vehicle autonomy to near-term practical use cases for consumer environments,” says Pradyot. “Leveraging high information maps as an input to our contextual navigation engine, Ottonomy.IO has the most robust navigation suite running in live conditions for both indoor and outdoor navigation for restaurants, retail spaces and airports.”

While new use cases are on the rise, data sets are still limited. Pradyot will discuss the fusion tools and techniques that Ottonomy.IO’s delivery robots use to bridge this gap while operating safely and efficiently in crowded and complicated environments. The presentation, in the Automotive Transportation-Autonomous Robotics track of NVIDIA GTC 2022, addresses “efficient camera and lidar fusion” as a way to build high information navigation maps.

These high information maps achieve several objectives:

- Thin layer of condensed information maps useful for intelligent navigation.

- Optimized for edge hardware running the mapping and localization pipelines.

- Real-time fusion of multimodal sensors, specifically 3D lidars and RGB cameras.

- Navigation maps that combine both metric and semantic distance.

- Information about the environment as an input to Ottonomy’s Contextual Navigation.